What is workload identity?

Applications that run on Kubernetes often need to access other services, and if you are running on Google’s cloud, they will likely need to access some Google Cloud services (databases, storage, or AI services, because everything is AI now!). This raises the question of how a Kubernetes application can securely access such cloud services. You certainly don’t want to hard-code your user access credentials into your app!

Workload Identity associates a Kubernetes Service Account with a Cloud IAM service account, so that applications can securely access cloud resources using their Kubernetes identity. This eliminates the need for long-lived credentials. Workload identity uses the following features of Kubernetes:

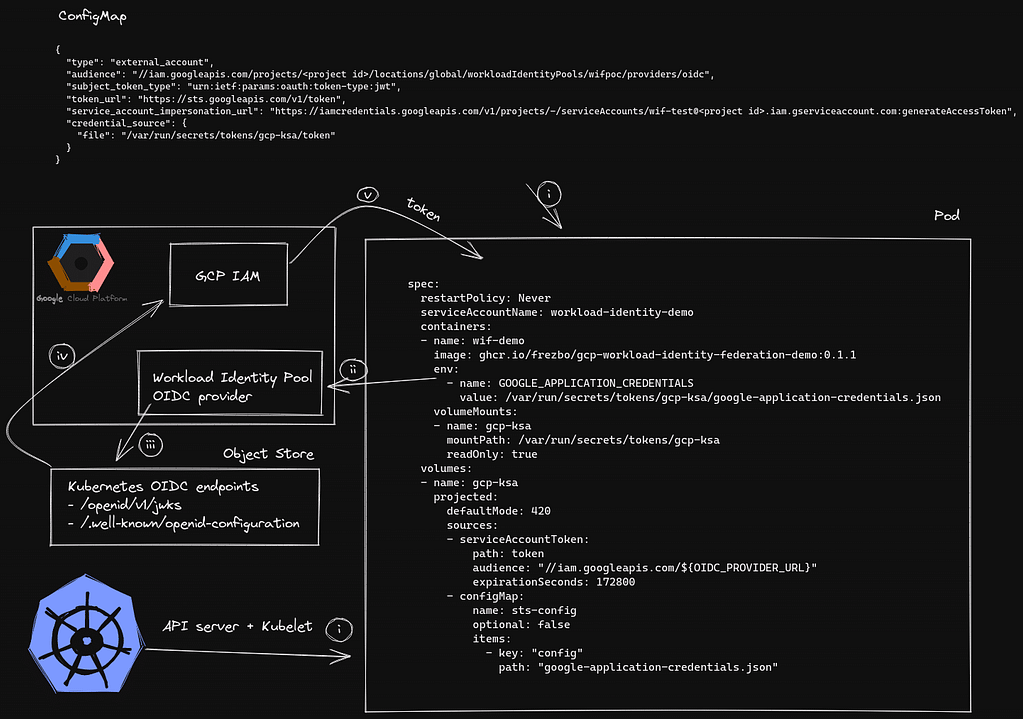

High level overview

The application that needs to talk to the Google Cloud API refers to a Kubernetes config map which stores the information about the Google Cloud IAM Workload Identity Pool OIDC Provider. (OIDC is OpenID Connect – an identity layer on top of the OAuth 2.0 protocol.) The config map, along with a Kubernetes projected service account token with an audience for the Workload Identity Pool OIDC Provider, is mounted into the application pod.

The Kubernetes API server is configured with the Google Cloud IAM Workload Identity Pool OIDC Provider URL as one of its api-audiences. The OIDC validation keys and the OIDC discovery document are retrieved from the Kubernetes API server and stored in a GCS bucket, so that Google Cloud IAM can verify that a token was issued by the associated Kubernetes cluster and carries the expected audience.

When the application needs to authenticate to Google Cloud, it first presents its Kubernetes token to the Workload Identity Pool OIDC Provider, which uses the keys stored in the GCS bucket to validate it. Once the token is validated, and the workload’s federated identity is allowed to impersonate the corresponding Google service account, a token is issued to the workload. The workload then uses this token to authenticate to Google Cloud APIs.
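To see the claims Google Cloud IAM validates (iss, aud and sub), it can help to decode a projected service account token. The following is a minimal bash sketch assuming GNU coreutils; jwt_claims is a hypothetical helper name, and it only decodes the payload without verifying the signature, so it is for inspection only.

```shell
# Print the decoded payload (claims) of a JWT such as a projected Kubernetes
# service account token. Hypothetical helper; does NOT verify the signature.
jwt_claims() {
  local payload
  # A JWT is header.payload.signature; keep the middle segment.
  payload=$(printf '%s' "$1" | cut -d '.' -f 2)
  # Convert the base64url alphabet back to standard base64.
  payload=$(printf '%s' "$payload" | tr '_-' '/+')
  # Restore the '=' padding that base64url omits.
  case $(( ${#payload} % 4 )) in
    2) payload="${payload}==" ;;
    3) payload="${payload}=" ;;
  esac
  printf '%s' "$payload" | base64 -d
}
```

Once the setup below is complete, something like jwt_claims "$(kubectl --kubeconfig kubeconfig --namespace default create token workload-identity-demo --audience "//iam.googleapis.com/${OIDC_PROVIDER_URL}")" should show an iss of https://storage.googleapis.com/${BUCKET_NAME} and a sub of system:serviceaccount:default:workload-identity-demo.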

Setting up workload identity on a Talos Cluster in GCP

While the steps outlined below are specific to a Talos Kubernetes cluster running in GCP, they can be adapted to a Kubernetes cluster running anywhere, even a local kind cluster. (One of the many advantages of Talos Linux for your Kubernetes clusters is that the same immutable, Kubernetes-specific operating system runs on all platforms: bare metal, Raspberry Pi, local Docker/QEMU, GCP, AWS, Hyper-V, etc. So you don’t need to adapt your processes for different environments; they will just work.)

Start by following the Talos docs on setting up a cluster in GCP.

Once the cluster is deployed and the kubeconfig, talosconfig and cluster outputs have been retrieved, we can proceed with the next steps.

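As an example, we will use a GCP Workload Identity Pool to authorize a specific Kubernetes service account to access Cloud Storage in the project, such as the bucket created in the cluster deployment step. The commands below assume that PROJECT (the GCP project ID) and BUCKET_NAME (the bucket created during cluster deployment) are set in your shell.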
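Because the commands below only reference PROJECT and BUCKET_NAME, an unset variable would silently expand to an empty string inside a gcloud or talosctl argument. As a hedged convenience, a small bash check like this one (require_env is a hypothetical helper) can catch that up front.

```shell
# Fail if any of the named variables is unset or empty (bash indirect expansion).
# Hypothetical helper, not part of any tool used in this post.
require_env() {
  local name missing=0
  for name in "$@"; do
    if [ -z "${!name:-}" ]; then
      echo "error: environment variable ${name} is not set" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, run require_env PROJECT BUCKET_NAME || exit 1 before continuing.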

Start by creating a Kubernetes Service Account that a Kubernetes workload can use:

kubectl \
  --kubeconfig kubeconfig \
  --namespace default \
  create sa workload-identity-demo

Next we need to create a Google IAM workload identity pool and a workload identity pool provider:

gcloud iam workload-identity-pools create \
  workload-identity-demo \
  --location="global" \
  --description="Workload Identity demo" \
  --display-name="Workload Identity Demo" \
  --project "${PROJECT}"

Now we will create an OIDC provider for the workload identity pool created above. Notice that we define a custom attribute, attribute.sub, which maps to the sub claim of the Kubernetes token, and that the issuer URI is set to the GCS bucket.
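Kubernetes sets the sub claim of a service account token to a fixed format built from the namespace and the service account name, and the mapping attribute.sub=assertion.sub copies it verbatim. This sketch (ksa_subject is a hypothetical helper) shows the value the workload-identity-demo service account ends up with, which is what the IAM binding later matches on.

```shell
# Build the sub claim Kubernetes uses for a service account token.
# With attribute.sub=assertion.sub, IAM stores this same value in attribute.sub.
ksa_subject() {
  local namespace="$1" name="$2"
  printf 'system:serviceaccount:%s:%s\n' "$namespace" "$name"
}
```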

gcloud iam workload-identity-pools providers create-oidc \
  wif-demo-oidc \
  --workload-identity-pool="workload-identity-demo" \
  --issuer-uri="https://storage.googleapis.com/${BUCKET_NAME}" \
  --location="global" \
  --attribute-mapping="google.subject=assertion.sub,attribute.sub=assertion.sub"

Now we need to retrieve the full resource name of the OIDC provider, which we store in OIDC_PROVIDER_URL:

OIDC_PROVIDER_URL=$(gcloud iam workload-identity-pools providers list --location="global" --workload-identity-pool="workload-identity-demo" --filter="name:wif-demo-oidc" --format json | jq -r '.[0].name')
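The provider’s resource name typically looks like projects/PROJECT_NUMBER/locations/global/workloadIdentityPools/workload-identity-demo/providers/wif-demo-oidc. As a sketch, both the token audience used later in the Job manifest and the pool’s resource name can be derived from it with plain bash parameter expansion; the helper names are hypothetical and the sample value in the tests is made up.

```shell
# Audience for the projected token: "//iam.googleapis.com/" + provider name.
provider_audience() {
  printf '//iam.googleapis.com/%s\n' "$1"
}

# Pool resource name: strip the trailing "/providers/<id>" segment.
pool_from_provider() {
  printf '%s\n' "${1%/providers/*}"
}
```

For example, WORKLOAD_IDENTITY_POOL_URL=$(pool_from_provider "${OIDC_PROVIDER_URL}") should yield the same value as the gcloud workload-identity-pools list lookup used further down.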

Now we will patch the Talos Kubernetes API server to add this OIDC provider to its api-audiences alongside the default API server audience, and to use the GCS bucket as the service account issuer and JWKS URI, so that the iss claim of service account tokens matches the issuer URI configured on the provider. Talos by default generates ECDSA keys for signing Kubernetes service account tokens, which don’t work with Google’s IAM Workload Identity Pool OIDC provider. Instead, we need to generate an RSA key and replace the default service account signing key:

# Generate a 4096-bit RSA private key, base64-encoded on a single line (GNU base64)
RSA_KEY_ENCODED=$(openssl genrsa 4096 2> /dev/null | base64 -w 0)

# First, get the external IPs of the control plane nodes
CONTROL_PLANE_NODE_ADDRESSES=$(kubectl --kubeconfig kubeconfig get nodes --output json | jq -r '.items[] | select(.metadata.labels."node-role.kubernetes.io/control-plane" == "").status.addresses[] | select(.type == "ExternalIP").address')

# Now patch each control plane node. Only /cluster/apiServer/extraArgs is
# patched, so the other apiServer settings in the generated config are kept.
for NODE_ADDRESS in ${CONTROL_PLANE_NODE_ADDRESSES}; do
  talosctl \
    --talosconfig talosconfig \
    --nodes "${NODE_ADDRESS}" \
    patch mc \
    --immediate \
    --patch "[{\"op\": \"add\", \"path\": \"/cluster/apiServer/extraArgs\", \"value\": {\"api-audiences\": \"https://kubernetes.default.svc.cluster.local,iam.googleapis.com/${OIDC_PROVIDER_URL}\", \"service-account-issuer\": \"https://storage.googleapis.com/${BUCKET_NAME}\", \"service-account-jwks-uri\": \"https://storage.googleapis.com/${BUCKET_NAME}/keys.json\"}}, {\"op\": \"add\", \"path\": \"/cluster/serviceAccount\", \"value\": {\"key\": \"${RSA_KEY_ENCODED}\"}}]"
done
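The escaped JSON patch above is easy to get wrong. As an alternative, the same settings could be written as a Talos strategic merge patch, a partial machine config that Talos merges into the existing one. This is a minimal sketch assuming OIDC_PROVIDER_URL, BUCKET_NAME and RSA_KEY_ENCODED are set as above; write_wif_patch and the output file name are hypothetical.

```shell
# Write the API server and service account settings as a strategic merge
# patch (YAML). Assumes OIDC_PROVIDER_URL, BUCKET_NAME, RSA_KEY_ENCODED are set.
write_wif_patch() {
  local out="$1"
  cat > "$out" <<EOF
cluster:
  apiServer:
    extraArgs:
      api-audiences: "https://kubernetes.default.svc.cluster.local,iam.googleapis.com/${OIDC_PROVIDER_URL}"
      service-account-issuer: "https://storage.googleapis.com/${BUCKET_NAME}"
      service-account-jwks-uri: "https://storage.googleapis.com/${BUCKET_NAME}/keys.json"
  serviceAccount:
    key: "${RSA_KEY_ENCODED}"
EOF
}
```

The file could then be applied per node with talosctl --talosconfig talosconfig --nodes "${NODE_ADDRESS}" patch mc --patch @wif-patch.yaml plus the same apply-mode flag as in the loop above; check that your talosctl version accepts strategic merge patches before relying on this.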

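Now we need to retrieve the cluster’s JSON Web Key Set (served at /openid/v1/jwks) and OIDC discovery document (served at /.well-known/openid-configuration) from the Kubernetes API server and upload them to the GCS bucket. Because the API server now uses the bucket as its issuer and JWKS URI, the discovery document points Google Cloud IAM at the uploaded keys.json. Both objects are made publicly readable so that Google Cloud IAM can fetch them without credentials.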

# Stream each document from stdin ("-") into a publicly readable object
kubectl \
  --kubeconfig kubeconfig \
  get --raw '/openid/v1/jwks' | \
  gsutil \
    -h "Cache-Control:public, max-age=0" \
    -h "Content-Type:application/json" \
    cp -a public-read \
    - "gs://${BUCKET_NAME}/keys.json"

kubectl \
  --kubeconfig kubeconfig \
  get --raw '/.well-known/openid-configuration' | \
  gsutil \
    -h "Cache-Control:public, max-age=0" \
    -h "Content-Type:application/json" \
    cp -a public-read \
    - "gs://${BUCKET_NAME}/.well-known/openid-configuration"
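Since Google Cloud IAM fetches these files anonymously, it is worth checking the public copy. The sketch below (json_field and check_discovery are hypothetical helpers) does a rough, sed-based check that the discovery document’s issuer and jwks_uri point at the bucket and that RS256 is advertised; it assumes compact JSON with no escaped quotes in those fields, so prefer jq for anything more robust.

```shell
# Rough extraction of a top-level string field from compact JSON on stdin.
json_field() {
  sed -n "s/.*\"$1\":\"\([^\"]*\)\".*/\1/p"
}

# Succeeds if the discovery document points at the bucket and lists RS256.
check_discovery() {
  local doc="$1" bucket="$2" issuer jwks
  issuer=$(printf '%s' "$doc" | json_field issuer)
  jwks=$(printf '%s' "$doc" | json_field jwks_uri)
  [ "$issuer" = "https://storage.googleapis.com/${bucket}" ] &&
    [ "$jwks" = "https://storage.googleapis.com/${bucket}/keys.json" ] &&
    printf '%s' "$doc" | grep -q '"RS256"'
}
```

For example, check_discovery "$(curl -s "https://storage.googleapis.com/${BUCKET_NAME}/.well-known/openid-configuration")" "${BUCKET_NAME}" && echo ok. Fetching the public URL with curl also confirms the object is readable without credentials.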

Now we can proceed to create a Google IAM service account that will be used to authenticate to Google Cloud IAM:

gcloud iam service-accounts create wif-demo-sa --project "${PROJECT}"

We need to get the full path of the workload identity pool created previously:

WORKLOAD_IDENTITY_POOL_URL=$(gcloud iam workload-identity-pools list --location="global" --filter="name:workload-identity-demo" --format json | jq -r '.[].name')

Now we can add an IAM policy binding to the Google IAM service account created above.

Here we allow the Kubernetes Service Account named workload-identity-demo (in the default namespace) to impersonate the Google service account wif-demo-sa:

gcloud iam service-accounts add-iam-policy-binding \
  "wif-demo-sa@${PROJECT}.iam.gserviceaccount.com" \
  --role roles/iam.workloadIdentityUser \
  --member "principalSet://iam.googleapis.com/${WORKLOAD_IDENTITY_POOL_URL}/attribute.sub/system:serviceaccount:default:workload-identity-demo"
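The member string follows a fixed pattern: the pool’s resource name, the mapped attribute, and the Kubernetes sub value. A small hypothetical bash helper like this one makes it easier to grant another service account (say, in a different namespace) without hand-editing the long string.

```shell
# Build a principalSet member that matches every federated identity whose
# attribute.sub equals the given Kubernetes service account's sub claim.
ksa_principal_set() {
  local pool="$1" namespace="$2" name="$3"
  printf 'principalSet://iam.googleapis.com/%s/attribute.sub/system:serviceaccount:%s:%s\n' \
    "$pool" "$namespace" "$name"
}
```

For example, ksa_principal_set "${WORKLOAD_IDENTITY_POOL_URL}" default workload-identity-demo should reproduce the --member value above.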

Next, we grant the Google IAM service account the Storage Admin role on the Google Cloud project:

gcloud projects add-iam-policy-binding \
  "${PROJECT}" \
  --member "serviceAccount:wif-demo-sa@${PROJECT}.iam.gserviceaccount.com" \
  --role roles/storage.admin

Now we can move on to creating a demo workload to test that access works correctly. Before that, we need to generate the credential configuration file used to authenticate as the Google service account and save it as a Kubernetes config map:

gcloud iam workload-identity-pools create-cred-config \
  "${OIDC_PROVIDER_URL}" \
  --service-account="wif-demo-sa@${PROJECT}.iam.gserviceaccount.com" \
  --credential-source-file="/var/run/secrets/tokens/gcp-ksa/token" \
  --output-file sts-creds.json

kubectl \
  --kubeconfig kubeconfig \
  --namespace default \
  create configmap \
  sts-config \
  --from-file=config=sts-creds.json
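The credential config only works if its credential_source.file matches where the Job mounts the projected token (the volume’s mountPath plus the token’s path). The generated file also contains other fields, such as the audience and token URL, that are not checked here. This is a rough sketch; token_path_matches is a hypothetical helper that strips whitespace so gcloud’s pretty-printed JSON compares cleanly.

```shell
# Succeeds if the credential config reads its token from
# <mount_path>/<token_rel_path>. Whitespace is removed before matching.
token_path_matches() {
  local cred_file="$1" mount_path="$2" token_rel_path="$3"
  tr -d '[:space:]' < "$cred_file" |
    grep -Fq "\"file\":\"${mount_path}/${token_rel_path}\""
}
```

For example, token_path_matches sts-creds.json /var/run/secrets/tokens/gcp-ksa token && echo ok, using the mountPath and token path from the Job manifest.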

Now we will deploy a simple app that lists the names of all GCS buckets in the project:

cat << EOF | kubectl --kubeconfig kubeconfig --namespace default apply -f -
apiVersion: batch/v1
kind: Job
metadata:
  name: wif-demo
  labels:
    app: wif-demo
spec:
  ttlSecondsAfterFinished: 100
  template:
    metadata:
      labels:
        app: wif-demo
    spec:
      restartPolicy: Never
      serviceAccountName: workload-identity-demo
      containers:
        - name: wif-demo
          image: ghcr.io/frezbo/gcp-workload-identity-federation-demo:0.1.1
          env:
            - name: GOOGLE_APPLICATION_CREDENTIALS
              value: /var/run/secrets/tokens/gcp-ksa/google-application-credentials.json
            - name: GOOGLE_CLOUD_PROJECT
              value: "${PROJECT}"
          volumeMounts:
            - name: gcp-ksa
              mountPath: /var/run/secrets/tokens/gcp-ksa
              readOnly: true
          resources: {}
      volumes:
        - name: gcp-ksa
          projected:
            defaultMode: 420
            sources:
              - serviceAccountToken:
                  path: token
                  audience: "//iam.googleapis.com/${OIDC_PROVIDER_URL}"
                  expirationSeconds: 172800
              - configMap:
                  name: sts-config
                  optional: false
                  items:
                    - key: "config"
                      path: "google-application-credentials.json"
EOF
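The demo image relies on a Google client library to read GOOGLE_APPLICATION_CREDENTIALS. To make the flow described in the overview concrete, here is a hedged curl sketch of the two calls such a library makes with an external_account config: an OAuth 2.0 token exchange at Google’s Security Token Service, then a generateAccessToken call on the IAM Credentials API to impersonate the service account. The helper names are hypothetical and this is not needed to run the demo.

```shell
# Step 1: exchange the Kubernetes token (read from a file) for a federated
# access token at Google STS. $1 is "//iam.googleapis.com/<provider name>".
sts_exchange() {
  local audience="$1" token_file="$2"
  curl -sS -X POST "https://sts.googleapis.com/v1/token" \
    --data-urlencode "grant_type=urn:ietf:params:oauth:grant-type:token-exchange" \
    --data-urlencode "audience=${audience}" \
    --data-urlencode "scope=https://www.googleapis.com/auth/cloud-platform" \
    --data-urlencode "requested_token_type=urn:ietf:params:oauth:token-type:access_token" \
    --data-urlencode "subject_token_type=urn:ietf:params:oauth:token-type:jwt" \
    --data-urlencode "subject_token=$(cat "$token_file")"
}

# IAM Credentials API method that mints an access token for a service account.
impersonation_url() {
  printf 'https://iamcredentials.googleapis.com/v1/projects/-/serviceAccounts/%s:generateAccessToken\n' "$1"
}

# Step 2: use the federated token to impersonate the Google service account.
impersonate() {
  local federated_token="$1" sa_email="$2"
  curl -sS -X POST "$(impersonation_url "$sa_email")" \
    -H "Authorization: Bearer ${federated_token}" \
    -H "Content-Type: application/json" \
    -d '{"scope":["https://www.googleapis.com/auth/cloud-platform"]}'
}
```

For example, save a token from kubectl create token workload-identity-demo --audience "//iam.googleapis.com/${OIDC_PROVIDER_URL}" to a file, pass it to sts_exchange, extract access_token from the response (for instance with jq -r .access_token), and pass that to impersonate together with wif-demo-sa@${PROJECT}.iam.gserviceaccount.com; the response should contain an accessToken.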

We can check the logs by running the following command:

kubectl \
  --kubeconfig kubeconfig \
  --namespace default \
  logs \
  -l app=wif-demo

If everything is working, you should see the list of all buckets in the GCP project.


Cleaning up

gcloud projects remove-iam-policy-binding \
  "${PROJECT}" \
  --member "serviceAccount:wif-demo-sa@${PROJECT}.iam.gserviceaccount.com" \
  --role roles/storage.admin

gcloud iam service-accounts delete \
  "wif-demo-sa@${PROJECT}.iam.gserviceaccount.com" \
  --quiet

gcloud iam workload-identity-pools delete \
  workload-identity-demo \
  --location="global" \
  --quiet

Once the above steps are completed, further cleanup can be done as described in the cleanup section of the Talos docs.